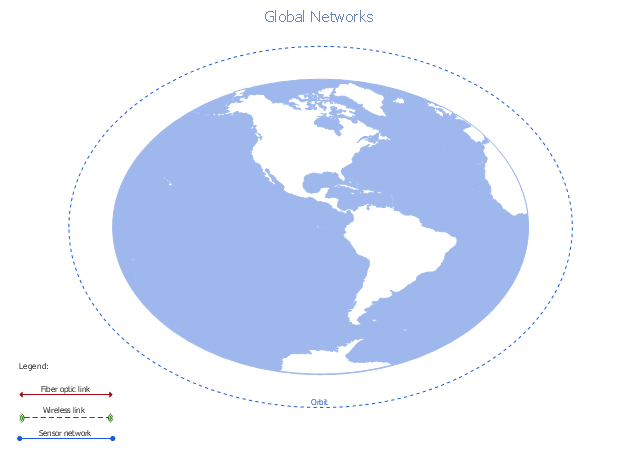

This conceptual diagram of a global vehicular network shows how vehicles, earth-based nodes, and space-based nodes communicate over a wireless network. It illustrates the architecture of global vehicular networks.

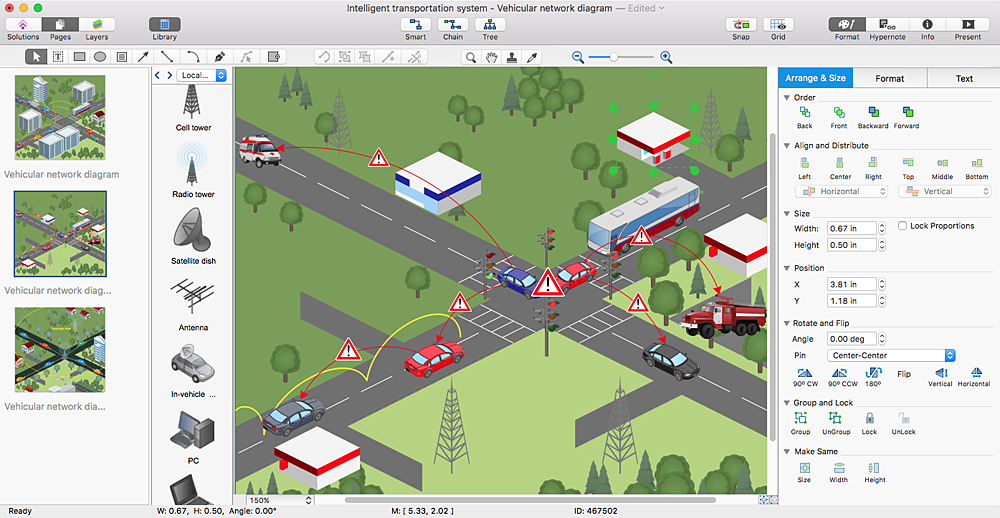

"Intelligent transportation systems (ITS) are advanced applications which, without embodying intelligence as such, aim to provide innovative services relating to different modes of transport and traffic management and enable various users to be better informed and make safer, more coordinated, and 'smarter' use of transport networks.

...

Intelligent transport systems vary in technologies applied, from basic management systems such as car navigation; traffic signal control systems; container management systems; variable message signs; automatic number plate recognition or speed cameras to monitor applications, such as security CCTV systems; and to more advanced applications that integrate live data and feedback from a number of other sources, such as parking guidance and information systems; weather information; bridge deicing systems; and the like." [Intelligent transportation system. Wikipedia]

The global vehicular network diagram template is included in the Vehicular Networking solution from the Computer and Networks area of ConceptDraw Solution Park.



How to Create a Vehicular Network Diagram

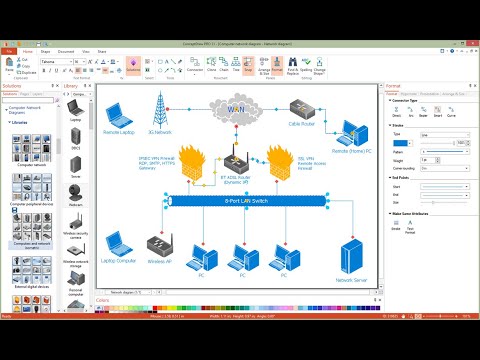

The ConceptDraw Vehicular Networking solution helps network engineers and network architects design, analyze, and present vehicular network diagrams quickly and efficiently. It lets you develop conceptual diagrams of vehicular networks swiftly: the templates and objects delivered with the solution support conceptual diagrams of both global and local vehicular networks. The Vehicular Networking solution makes documenting vehicular networks much easier.

Telecommunication Network Diagrams

The Telecommunication Network Diagrams solution extends ConceptDraw PRO software with samples, templates, and a large collection of vector stencils. These help networking and telecommunications specialists, as well as other users, create computer networking and telecommunication network diagrams for various fields: organizing the work of call centers; designing GPRS networks, GPS navigation systems, and mobile, satellite, and hybrid communication networks; and building mobile TV networks and wireless broadband networks.

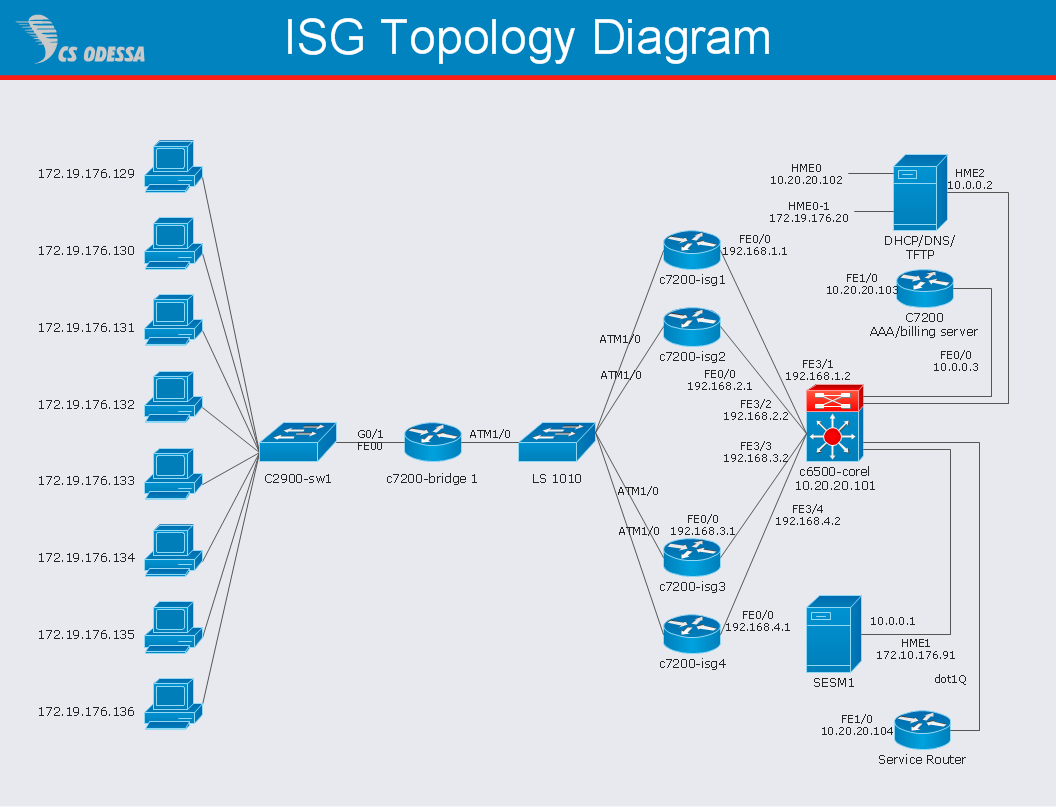

Network Diagram Software ISG Network Diagram

Drawing an ISG network diagram using ConceptDraw PRO stencils

Vehicular Networking

Network engineering is an extensive area with a wide range of applications. Depending on the field of application, network engineers design and implement either small networks or complex networks that cover wide territories. In the latter case, specialized drawing software such as ConceptDraw PRO is an ideal resource.